Licenses and patents

Research results and software developed at the faculty can be licensed as needed. This form of cooperation suits the development of more complex solutions that require a guaranteed delivery time and involve a higher budget. If you are interested, please send us the relevant field of expertise together with a brief description of the problem and the desired results. This information will not be shared in any way and will only be used to select suitable experts for further discussions about cooperation.

sAOTS

Development of the sAOTS system began in 2009 as part of the project "Research and development of technologies for intelligent optical tracking systems", funded by the Czech Ministry of Industry and Trade. Our team contributed to creating a single-camera solution capable of tracking distant and low-visibility targets. The system enables real-time monitoring of objects located several kilometers away and can track up to 50 targets simultaneously while designating one of them as the primary target.

Building on this foundation, we later developed an advanced version of the system. This version features a 22x zoom camera mounted on a military-grade manipulator, which significantly enhances the system’s versatility and range. The result was the sAOTS (Semi-Automated Object Tracking System).

In 2025, we began researching ways to modernize the sAOTS, with a focus on next-generation surveillance capabilities. The current development aims to integrate edge computing and high-speed image processing to improve the system's performance, efficiency, and real-time responsiveness in critical security and defense applications.

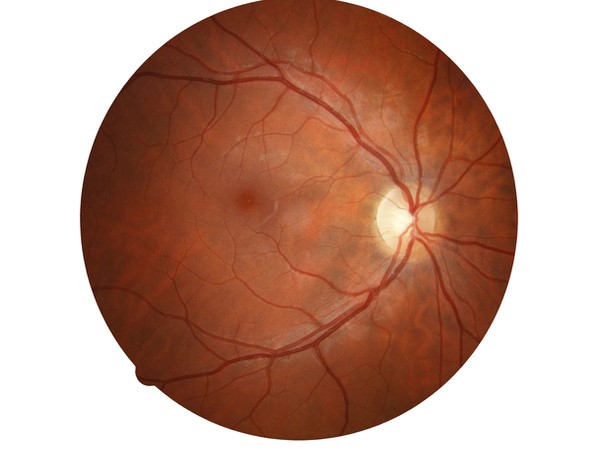

This software application can be used to process images of the eye retina. It searches the retinal image for the vein pattern, detects the veins, and extracts their features to generate a biometric template, which can then be used to recognize people by their retinal vein pattern.
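The final step, recognizing a person by comparing two biometric templates, can be illustrated with a minimal sketch. It assumes, purely for illustration, that a template is a list of (x, y) positions of vein features such as bifurcations, that the two images are already aligned, and that features are paired greedily within a fixed pixel tolerance. The names `match_score` and `same_person`, the tolerance of 5 pixels and the 0.6 acceptance threshold are all hypothetical; this is not the application's actual matching method.

```python
import math
from typing import List, Tuple

# Hypothetical template format: (x, y) pixel positions of vein features,
# e.g. bifurcations, in an already aligned retinal image.
Point = Tuple[float, float]


def match_score(a: List[Point], b: List[Point], tolerance: float = 5.0) -> float:
    """Fraction of feature points paired one-to-one within `tolerance` pixels.

    Assumes both templates are already registered (no rotation/shift search).
    """
    if not a or not b:
        return 0.0
    unpaired = list(b)
    matched = 0
    for ax, ay in a:
        # Greedily pair with the nearest still-unpaired point of template b.
        best_index, best_dist = -1, tolerance
        for i, (bx, by) in enumerate(unpaired):
            dist = math.hypot(ax - bx, ay - by)
            if dist <= best_dist:
                best_index, best_dist = i, dist
        if best_index >= 0:
            unpaired.pop(best_index)
            matched += 1
    # Normalising by the larger template penalises unmatched extra points.
    return matched / max(len(a), len(b))


def same_person(a: List[Point], b: List[Point], threshold: float = 0.6) -> bool:
    """Accept the identity claim when the score reaches a hypothetical threshold."""
    return match_score(a, b) >= threshold


enrolled = [(10, 20), (35, 40), (60, 15), (80, 70)]
probe = [(11, 21), (34, 42), (61, 14), (120, 5)]
print(match_score(enrolled, probe))  # 0.75: three of four features pair up
print(same_person(enrolled, probe))  # True
```

A real system would first register the two images (compensating for shift and rotation) before comparing feature positions; the greedy pairing here is chosen only because it is short and easy to follow.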

CGPAnalyzer

CGPAnalyzer was developed to analyse and visualise a genetic record (i.e. a log file) generated by circuit design software based on Cartesian Genetic Programming (CGP). CGPAnalyzer automatically finds key genetic improvements in the genetic record and presents the corresponding phenotypes. Its comparison module allows the user to select two phenotypes and compare their structure, history and functionality. This makes it possible to reconstruct the process by which new circuit designs were discovered. This feature is demonstrated by analysing the genetic record of an evolved 9-input parity circuit. CGPAnalyzer is a desktop application with a graphical user interface, built with Java 8 and the Swing library.
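Finding "key genetic improvements" in a genetic record can be pictured as scanning the log for the points where the best fitness found so far strictly increases. The minimal sketch below assumes a hypothetical log format with one `generation;fitness;chromosome` line per entry; the actual format of the CGP software's log and CGPAnalyzer's own detection criteria are not described here, so `LogEntry`, `parse_log` and `key_improvements` are illustrative names only. Fitness is taken as the number of correctly computed truth-table rows, which for a single-output 9-input parity circuit is at most 2^9 = 512.

```python
from dataclasses import dataclass
from typing import Iterable, List


@dataclass
class LogEntry:
    generation: int
    fitness: int     # correctly computed truth-table rows (max 2**9 = 512 for 9-input parity)
    chromosome: str  # hypothetical textual encoding of the CGP genotype


def parse_log(lines: Iterable[str]) -> List[LogEntry]:
    """Parse lines of the hypothetical form 'generation;fitness;chromosome'."""
    entries = []
    for line in lines:
        line = line.strip()
        if not line or line.startswith("#"):
            continue  # skip blank lines and comments
        generation, fitness, chromosome = line.split(";", 2)
        entries.append(LogEntry(int(generation), int(fitness), chromosome))
    return entries


def key_improvements(entries: Iterable[LogEntry]) -> List[LogEntry]:
    """Keep only entries whose fitness beats every earlier entry."""
    best = None
    improvements = []
    for entry in entries:
        if best is None or entry.fitness > best:
            best = entry.fitness
            improvements.append(entry)
    return improvements


log_lines = [
    "# generation;fitness;chromosome",
    "0;256;g0",
    "1;256;g1",
    "2;300;g2",
    "3;290;g3",
    "4;512;g4",
]
for entry in key_improvements(parse_log(log_lines)):
    print(entry.generation, entry.fitness, entry.chromosome)
```

With this sample log, generations 0, 2 and 4 are reported; the phenotypes decoded from those chromosomes would be the natural candidates to pass to a structural comparison such as the one CGPAnalyzer's comparison module offers.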